The Business Cost of Inadequate Testing and Verification

Inadequate Testing Priorities: The Hidden Business Risk in Product Development

In product development, testing and verification are often praised in principle but underfunded in practice. Organizations regularly declare quality as a core value, yet budget allocations and schedule pressures reveal a different reality. I am grateful to LinkedIn for providing the many prompts that catalyze these blog posts.

Inadequate Testing Priorities quietly undermine product reliability, customer trust, and long-term profitability. When leadership treats testing as a downstream activity rather than a strategic investment, the consequences accumulate—defects increase, recalls rise, and reputational damage follows.

Testing is not an expense line item to trim under pressure; it is risk management in action. Cutting the budget often increases the risk to system design and quality.

What is the cost of avoiding a potential quality problem?

Testing and Verification in Product Development: Why It Matters

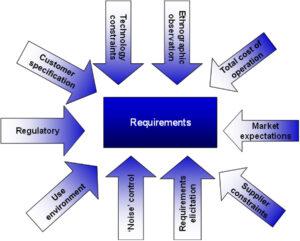

Testing and verification confirm that a system:

- Meets requirements
- Performs reliably under expected conditions
- Behaves safely under edge and failure scenarios
- Aligns with regulatory and compliance standards

Verification answers: Did we build the product right?

Validation answers: Did we build the right product?

When Inadequate Testing Priorities dominate decision-making, these questions go unanswered until customers discover the gaps.

Five Approaches to Product Testing That Strengthen Verification

If businesses are serious about avoiding Inadequate Testing Priorities, they must move beyond a single-method testing mindset. As outlined in Five Approaches to Product Testing, robust verification requires multiple complementary methods.

No single approach is sufficient. Each uncovers different classes of risk.

1. Compliance Testing (Requirements and Standards Verification)

Compliance testing verifies that the product meets documented requirements, contractual obligations, and regulatory standards.

This is the baseline — the minimum acceptable level of testing.

It depends heavily on:

- Clear requirements
- Controlled revisions
- Strong configuration management
- Traceability from requirement to test case

When release notes are missing or documentation is poorly maintained, compliance testing becomes unreliable, reinforcing the dangers of Inadequate Testing Priorities.
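
Traceability from requirement to test case lends itself to a mechanical check. The following is a minimal sketch, assuming requirements and test cases are available as simple mappings. The identifiers (`REQ-001`, `TC-01`), the sample data, and the function name `traceability_gaps` are hypothetical and stand in for whatever a real requirements tool exports.

```python
def traceability_gaps(requirements, test_cases):
    """Return (untested requirement IDs, test IDs that cite unknown requirements)."""
    # Every requirement ID that at least one test case claims to verify.
    covered = {req for cited in test_cases.values() for req in cited}
    untested = sorted(set(requirements) - covered)
    orphaned = sorted(
        test_id
        for test_id, cited in test_cases.items()
        if any(req not in requirements for req in cited)
    )
    return untested, orphaned


# Hypothetical data for illustration only.
REQUIREMENTS = {
    "REQ-001": "Unit powers up within 2 s",
    "REQ-002": "Unit reports faults on the bus",
    "REQ-003": "Unit survives supply voltage dropouts",
}
TEST_CASES = {
    "TC-01": ["REQ-001"],
    "TC-02": ["REQ-001", "REQ-002"],
    "TC-03": ["REQ-009"],  # cites a requirement that is not in the baseline
}

untested, orphaned = traceability_gaps(REQUIREMENTS, TEST_CASES)
print("Requirements without a test:", untested)
print("Tests citing unknown requirements:", orphaned)
```

A check like this only reports that a link exists, not that the linked test is adequate; it is a guard against silent gaps, not a substitute for test review.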

2. Combinatorial Testing (Interaction-Based Risk Discovery)

Combinatorial testing systematically evaluates interactions between multiple variables. This reflects reality: in the field, a product is inundated with simultaneous stimuli, and one condition can affect another. For example, high bus load can degrade product performance (function latency).

Products rarely fail due to a single variable. They fail because:

- Two or more parameters interact unexpectedly
- Environmental and functional demands overlap
- Hardware and software behaviors combine under load

This approach is especially critical in complex systems where simulation alone cannot reveal interaction effects.

When organizations rely only on nominal test cases, they miss these compounded risks.
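
One way to see what a nominal-only suite misses is to count the two-way parameter interactions it never exercises. The sketch below is illustrative, not a full pairwise test generator: it only measures coverage. The parameter names and values (`bus_load`, `temperature`, `supply_voltage`) and the function `uncovered_pairs` are assumptions made for the example.

```python
from itertools import combinations, product


def uncovered_pairs(parameters, test_cases):
    """Return the two-way value combinations that no test case exercises together."""
    required = set()
    for (p1, values1), (p2, values2) in combinations(sorted(parameters.items()), 2):
        for v1, v2 in product(values1, values2):
            required.add(((p1, v1), (p2, v2)))

    covered = set()
    for case in test_cases:
        # Sorting by parameter name keeps pair ordering consistent with `required`.
        for pair in combinations(sorted(case.items()), 2):
            covered.add(pair)
    return required - covered


# Hypothetical parameters for illustration only.
PARAMETERS = {
    "bus_load": ["low", "high"],
    "temperature": ["cold", "hot"],
    "supply_voltage": ["min", "max"],
}

# A nominal-only suite: one "happy path" configuration.
NOMINAL_SUITE = [{"bus_load": "low", "temperature": "cold", "supply_voltage": "min"}]

# The exhaustive suite, for comparison: every combination of every value.
FULL_SUITE = [dict(zip(PARAMETERS, values)) for values in product(*PARAMETERS.values())]

print("Pairs missed by nominal suite:", len(uncovered_pairs(PARAMETERS, NOMINAL_SUITE)))
print("Pairs missed by full suite:", len(uncovered_pairs(PARAMETERS, FULL_SUITE)))
```

With three two-valued parameters there are 12 two-way combinations; the single nominal case touches 3 of them. Real products have far more parameters, which is why dedicated pairwise generators exist to cover all pairs with far fewer cases than the full product.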

3. Stochastic and Exploratory Testing (Managing Uncertainty)

Stochastic testing introduces randomness into test sequences. Exploratory testing relies on expert intuition and unscripted evaluation. Repeating the same scripted sequence on every run will not reveal the module and parameter interactions that may cause errors.

These approaches:

- Mimic unpredictable real-world behavior
- Reveal sequence-sensitive failures
- Surface latent defects

This is particularly important when software state transitions matter—and when strict scripted testing would never reproduce the failure condition.

Overreliance on deterministic simulation without stochastic confirmation is a classic symptom of Inadequate Testing Priorities.
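
The difference between a fixed script and a randomized sequence can be shown with a toy example. This is a sketch under stated assumptions: `ToyModule` is a hypothetical module with a deliberately planted defect (a write while asleep corrupts state), and the operation names are invented for illustration. Each random sequence is generated from a recorded seed, so any failure can be replayed exactly.

```python
import random

OPERATIONS = ["wake", "sleep", "read", "write"]


class ToyModule:
    """Hypothetical module with a planted defect: writing while asleep corrupts state."""

    def __init__(self):
        self.asleep = False
        self.corrupted = False

    def apply(self, op):
        if op == "sleep":
            self.asleep = True
        elif op == "wake":
            self.asleep = False
        elif op == "write" and self.asleep:
            self.corrupted = True  # the latent, sequence-sensitive defect


def run_sequence(sequence):
    """Apply the operations in order; return True if the module stays uncorrupted."""
    module = ToyModule()
    for op in sequence:
        module.apply(op)
    return not module.corrupted


def random_sequence(seed, length=20):
    """Build a reproducible random sequence; the seed is the replay key."""
    rng = random.Random(seed)
    return [rng.choice(OPERATIONS) for _ in range(length)]


def find_failing_seeds(seeds):
    """Return the seeds whose generated sequence exposes the defect."""
    return [seed for seed in seeds if not run_sequence(random_sequence(seed))]


# The scripted test always runs the same order and never writes while asleep.
SCRIPTED = ["wake", "write", "read", "sleep"]
print("Scripted test passes:", run_sequence(SCRIPTED))

failing = find_failing_seeds(range(200))
print("Random sequences exposing the defect:", len(failing), "of 200")
```

Logging the seed alongside each failure is the design choice that matters here: without it, a randomly discovered defect may be impossible to reproduce for diagnosis.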

4. Extreme Testing (Beyond the Specified Limits)

Extreme testing pushes products beyond defined standards and operational ranges.

It answers critical questions:

- Where does the product actually fail?
- What is the failure mode?
- What margin exists between specification and breakdown?

Standards define expected use. Reality often exceeds those boundaries.

Organizations that test only to the minimum specification are performing contractual verification, not risk mitigation.
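
The margin question can be made concrete with a step-stress search: raise the stress level in increments past the specification limit until the unit fails, then express the gap as a fraction of the limit. The sketch below uses a hypothetical stand-in, `toy_unit_survives`, in place of a real test rig, and the voltage figures are invented for illustration.

```python
def find_failure_point(survives, start, step, ceiling):
    """Raise the stress level step by step; return the first failing level, or None."""
    level = start
    while level <= ceiling:
        if not survives(level):
            return level
        level += step
    return None  # no failure found within the range the rig can apply


def margin(spec_limit, failure_point):
    """Margin between specification and breakdown, as a fraction of the spec limit."""
    return (failure_point - spec_limit) / spec_limit


def toy_unit_survives(voltage):
    """Hypothetical stand-in for a physical test: this toy unit fails at 19 V."""
    return voltage < 19


SPEC_LIMIT_V = 16  # hypothetical upper specification limit
failure_v = find_failure_point(toy_unit_survives, start=SPEC_LIMIT_V, step=1, ceiling=30)
print("First failing level (V):", failure_v)
print("Margin over specification:", f"{margin(SPEC_LIMIT_V, failure_v):.1%}")
```

The step size bounds the resolution of the answer: the true failure point lies somewhere between the last surviving level and the first failing one. The search also says nothing about the failure mode, which still has to be observed and recorded on the bench.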

5. Attack Testing (Security and Adversarial Evaluation)

Attack testing subjects products to intentional malicious activity to uncover vulnerabilities.

This includes:

- Penetration testing
- Vulnerability exploitation
- Social engineering simulation
- Security mechanism validation

In interconnected systems, ignoring attack testing exposes organizations to reputational, regulatory, and financial disaster.

Cyber risk is product risk.

Budget Allocation for Testing: What Companies Say vs. What They Fund

In many industries (software, automotive, aerospace, medical devices), quality assurance and testing budgets typically fall into these ranges:

- Recommended: 25–40% of total development budget
- Common Actual Allocation: 10–20%
- In distressed programs: Less than 10%

For example, in a $20 million product development effort:

- Recommended testing budget: $5–8 million
- Frequently allocated: $2–3 million

That shortfall forces trade-offs:

- Reduced regression testing
- Deferred system integration testing
- Limited environmental or stress testing
- Compressed test cycles

These trade-offs reflect Inadequate Testing Priorities, not engineering limitations. From experience, this is a common failure mode.
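
The budget arithmetic above is easy to reproduce for any program size. The sketch below uses the article's figures for a $20 million effort; the function name `budget_range` is an assumption for illustration. Note that the full 10–20% band works out to $2–4 million, so the $2–3 million quoted above sits in the lower part of that band.

```python
def budget_range(total, low_pct, high_pct):
    """Return (low, high) dollar amounts for a percentage band of the total budget."""
    return total * low_pct // 100, total * high_pct // 100


TOTAL = 20_000_000  # development budget from the example above

recommended = budget_range(TOTAL, 25, 40)
common = budget_range(TOTAL, 10, 20)
allocated = (2_000_000, 3_000_000)  # "frequently allocated" figure from the text

# Best case: top of the allocated range against the bottom of the recommended range.
smallest_gap = recommended[0] - allocated[1]
# Worst case: bottom of the allocated range against the top of the recommended range.
largest_gap = recommended[1] - allocated[0]

print(f"Recommended: ${recommended[0]:,} to ${recommended[1]:,}")
print(f"10-20% band: ${common[0]:,} to ${common[1]:,}")
print(f"Shortfall:   ${smallest_gap:,} to ${largest_gap:,}")
```

Even in the best case, the shortfall equals the entire amount at the bottom of the allocated range, which is why the trade-offs listed above are forced rather than chosen.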

Overreliance on Simulation Without Physical Confirmation

The Growing Dependence on Digital Models

Simulation tools have dramatically improved design speed and analytical insight. Finite element models, digital twins, system dynamics simulations, and AI-based predictive models allow early design exploration.

However, simulation is not validation. The simulation itself requires some degree of verification. Are the models accurate? Do they include the relevant parameters and interactions, across the range of values seen in the real world? Unconfirmed models may lead us down the proverbial primrose path, producing the illusion of risk reduction rather than actual risk reduction.

The Risk of Unverified Models

Overreliance on simulation becomes dangerous when:

- Model assumptions are not challenged
- Input parameters lack empirical validation
- Boundary conditions are unrealistic
- No physical testing confirms model predictions

Simulation outputs are only as reliable as the assumptions embedded in them.

When organizations substitute simulation for testing—rather than using it to inform testing—they amplify risk. The absence of correlated physical testing prevents model calibration. Errors compound quietly until real-world failures expose them.

This is a hallmark of Inadequate Testing Priorities—mistaking computational efficiency for empirical truth.
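
Correlating a model against physical results can start as a simple comparison of predicted and measured values. The following is a minimal sketch: the quantity names, the sample numbers, and the 10% tolerance are all assumptions chosen for illustration, and an acceptable tolerance in practice depends on the product and the quantity being modeled.

```python
def correlate(predicted, measured, tolerance=0.10):
    """Compare model predictions with physical measurements.

    Returns a dict mapping each predicted quantity to one of:
    "correlated", "out of tolerance", or "unconfirmed" (no measurement exists).
    Assumes measured values are nonzero, since error is relative to the measurement.
    """
    report = {}
    for name, model_value in predicted.items():
        if name not in measured:
            report[name] = "unconfirmed"
            continue
        relative_error = abs(model_value - measured[name]) / abs(measured[name])
        report[name] = "correlated" if relative_error <= tolerance else "out of tolerance"
    return report


# Hypothetical values for illustration only.
PREDICTED = {"peak_temp_c": 80.0, "deflection_mm": 2.0, "resonance_hz": 120.0}
MEASURED = {"peak_temp_c": 100.0, "deflection_mm": 2.0}  # resonance was never measured

REPORT = correlate(PREDICTED, MEASURED)
for quantity, status in REPORT.items():
    print(f"{quantity}: {status}")
```

The "unconfirmed" status is the one worth watching: a prediction with no matching measurement is exactly the case where a model output is being treated as a test result.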

The False Economy of Skipping Physical Testing

The short-term savings gained by skipping hardware or system-level testing often lead to:

- Field failures
- Warranty claims
- Safety incidents
- Litigation
- Emergency redesign costs

Testing confirms or refutes simulation models. Without confirmation, models become beliefs.

Delayed Product and System Availability: The Compression Effect

Testing depends on product availability. When hardware, firmware, or integrated systems arrive late:

- Test windows shrink
- Teams compress test cycles
- Corners are cut
- Known issues are accepted as “manageable risks”

Instead of extending schedules to protect quality, leadership often compresses testing to protect launch dates.

The impact includes:

- Reduced coverage of edge cases
- Insufficient stress and durability testing
- Deferred validation to post-launch updates

Delayed availability creates cascading risk. Testing becomes reactive rather than preventative.

Poor Configuration Management and Documentation Failures

Effective testing requires focus, and release notes provide that focus. In practice, their absence often leads to testing features that are not included in the build, wasting time and effort. We cannot overstate the importance of configuration management.

Lack of Release Notes

Without clear release notes:

- Test teams do not know what changed or what is included in the iteration
- Regression scope becomes unclear
- Traceability is lost
- Known issues reappear

Release notes are not administrative artifacts—they are quality control instruments.
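
How release notes focus a test effort can be sketched in a few lines. The example assumes release notes are captured in a structured form listing which features are included in the iteration and which of those changed; the field names (`included`, `changed`), the feature names, and the function `plan_tests` are hypothetical.

```python
def plan_tests(release_notes, test_catalog):
    """Sort tests into priority, regression, and skip lists based on release notes.

    - priority:   the feature is included and changed in this iteration
    - regression: the feature is included but unchanged
    - skip:       the feature is not in this iteration, so testing it wastes effort
    """
    included = set(release_notes["included"])
    changed = set(release_notes["changed"])
    plan = {"priority": [], "regression": [], "skip": []}
    for test_id, feature in sorted(test_catalog.items()):
        if feature not in included:
            plan["skip"].append(test_id)
        elif feature in changed:
            plan["priority"].append(test_id)
        else:
            plan["regression"].append(test_id)
    return plan


# Hypothetical release notes and test catalog for illustration only.
RELEASE_NOTES = {
    "included": ["boot", "diagnostics", "can_gateway"],
    "changed": ["diagnostics"],
}
TEST_CATALOG = {
    "TC-10": "boot",
    "TC-11": "diagnostics",
    "TC-12": "can_gateway",
    "TC-13": "remote_update",  # feature not delivered in this iteration
}

PLAN = plan_tests(RELEASE_NOTES, TEST_CATALOG)
for bucket, tests in PLAN.items():
    print(f"{bucket}: {tests}")
```

Without the release notes, every test in the catalog looks equally relevant, and the team has no principled way to separate the three groups.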

Missing or Incomplete Product Documentation

When product documentation is weak or absent:

- Requirements traceability breaks down
- Verification matrices cannot be completed
- Field support struggles to diagnose issues
- Compliance risks increase

Testing relies on defined baselines. Poor configuration management creates ambiguity about what is being tested.

The Configuration Chaos Loop

Poor configuration management leads to:

- Unclear product baselines
- Unfocused testing of iterations, poor test preparation
- Inefficient or misaligned testing
- Undetected defects
- Rework
- Increased cost and schedule pressure

This cycle reinforces Inadequate Testing Priorities, where speed is valued over disciplined engineering controls.

The True Cost of Inadequate Testing Priorities

Short-term savings become long-term liabilities:

- Increased defect escape rates
- Customer dissatisfaction
- Brand erosion
- Safety recalls
- Regulatory penalties
- Team burnout

Widely cited industry estimates put the cost of fixing a defect after release at 10x to 100x the cost of correcting it during early verification stages.

Testing is not merely technical due diligence—it is financial risk mitigation.

Reframing Testing as Strategic Risk Management

Organizations that prioritize testing:

- Integrate test planning at project inception
- Allocate 25–40% of the development budget to quality and verification
- Use simulation to guide, not replace, physical testing
- Protect test schedules from compression
- Maintain disciplined configuration management
- Require robust release notes and documentation

These companies treat testing as an investment in the probability of success, not as overhead.

Leadership Determines Testing Culture

Testing culture reflects leadership priorities. If executives consistently compress test schedules, underfund quality, and celebrate on-time launches regardless of defect rates, the organization internalizes those signals.

Conversely, when leadership protects verification budgets and demands empirical confirmation of models, quality becomes embedded in the system.

Inadequate Testing Priorities are not accidental—they are strategic decisions.

And they carry predictable consequences.

For more information, contact us:

The Value Transformation LLC store.

Follow us on social media at:

Amazon Author Central https://www.amazon.com/-/e/B002A56N5E

Follow us on LinkedIn: https://www.linkedin.com/in/jonmquigley/

https://www.linkedin.com/company/value-transformation-llc

Follow us on Google Scholar: https://scholar.google.com/citations?user=dAApL1kAAAAJ