Agile Practices Applied to Line Management – Monitor

Situation Review

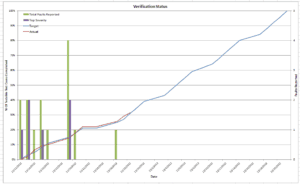

From our earlier blog post, we have a road map that resembles an inverted agile burn-down chart for our expected rate of test case accomplishment. We are tracking our actual rate of accomplishment against this expected rate. These two curves only help us understand the rate of accomplishment and the expected conclusion date of the test case execution. They do not inform us in any way about the quality of the product and the system, but we have a way of adding that.

Defect Arrival

As we execute the test cases, we will likely find failures. These failures or faults will be entered into a reporting system that allows us to track their resolution. We can also use that data here in our progress tracking sheet. Ultimately we want to learn two things: the expected conclusion date of the testing and, most importantly, a prediction (inasmuch as that is possible) of the quality of the system or product being tested. We can do this by adding a second scale to the progress tracking for the defect or fault reporting.
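To make the idea of a second scale concrete, here is a minimal sketch of a tracking sheet that carries both series: cumulative test cases (planned and executed) and cumulative defects. The `DayRecord` type, the field names, and the sample numbers are all assumptions made for illustration; they are not taken from the actual tracking sheet.

```python
from dataclasses import dataclass
from itertools import accumulate


@dataclass
class DayRecord:
    """One row of a hypothetical daily tracking sheet."""
    day: int
    planned_cases: int   # test cases planned for the day
    executed_cases: int  # test cases actually executed that day
    new_defects: int     # faults reported that day


def cumulative_view(records):
    """Return per-day rows with running totals for both scales."""
    planned = accumulate(r.planned_cases for r in records)
    executed = accumulate(r.executed_cases for r in records)
    defects = accumulate(r.new_defects for r in records)
    return [
        {"day": r.day, "planned": p, "executed": e, "defects": d}
        for r, p, e, d in zip(records, planned, executed, defects)
    ]


# Illustrative numbers only, not data from the project.
sheet = [
    DayRecord(day=1, planned_cases=10, executed_cases=8, new_defects=2),
    DayRecord(day=2, planned_cases=10, executed_cases=11, new_defects=3),
    DayRecord(day=3, planned_cases=10, executed_cases=9, new_defects=1),
]

for row in cumulative_view(sheet):
    print(row)
```

The planned and executed totals would be plotted on the first scale, and the defect total on the second, so that progress and quality can be read from the same chart.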

“Sprint” Meeting

Each morning, the team assembles in a meeting. This is a short meeting that follows an agile protocol. The scrum master (line manager) asks the team the three questions. The team members respond individually, reporting any problem with their ability to execute as well as any severe defects. Those defects are discussed and subsequently entered into the fault tracking system. The number of test cases executed is also discussed to assess the rate of progress. The quick meeting is dismissed, and the team members update their respective portions of the tracking sheet that records the progress being made. The scrum master (line manager) will compile the defect arrival rate and add it to the rate graphic. This documentation (illustrated and discussed below) will be delivered daily to the conventionally executed project.

All About the Metrics

The graphic below illustrates the number of faults reported for each day’s worth of work. In our testing we classify faults into four tiers, because not all faults warrant the same response. Some are minor blemishes that may not be much of an issue. Some are serious performance issues that will leave a customer unhappy. Some are catastrophic: they breach regulations or cause safety concerns. For this reason, we break down the faults by severity, which gives us a snapshot of the defect arrival rate at each level and lets us make some statements about the system or product.
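The severity breakdown can be sketched as a simple daily tally. The post does not name the four tiers, so the labels in `SEVERITIES` below are hypothetical placeholders, as are the function name and the sample fault list.

```python
from collections import Counter, defaultdict

# Hypothetical tier labels; the real four-tier scheme is not named in the post.
SEVERITIES = ("1-catastrophic", "2-serious", "3-moderate", "4-minor")


def arrivals_by_severity(faults):
    """Tally (day, severity) fault reports into {day: {severity: count}}."""
    per_day = defaultdict(Counter)
    for day, severity in faults:
        if severity not in SEVERITIES:
            raise ValueError(f"unknown severity: {severity!r}")
        per_day[day][severity] += 1
    # Emit every tier for every day so that missing tiers show up as zero.
    return {
        day: {s: per_day[day][s] for s in SEVERITIES}
        for day in sorted(per_day)
    }


# Illustrative fault reports only.
reported = [
    (1, "4-minor"),
    (1, "2-serious"),
    (2, "2-serious"),
    (2, "1-catastrophic"),
    (2, "4-minor"),
]

for day, counts in arrivals_by_severity(reported).items():
    print(day, counts)
```

Keeping the zero counts in the output makes it easy to draw a stacked bar per day, with one segment per severity tier.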

In the above example, we can see that with about 30% of the work accomplished, 14 faults have been reported. Of those 14 faults, 6 are serious and require attention. Yet we are only 30% of the way through our verification. What can we glean from that?

Well, one thing we can surmise is that the remaining 70% will almost assuredly have problems, and some of those problems will likely be of the top severity. Knowing this allows our project management function to begin adjusting the expectations of the stakeholders. Tracking this over iterations shows the project manager and key stakeholders how the product is progressing.
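One rough way to put a number on that expectation is a straight-line extrapolation. This is a sketch only, and not a method described in this post. It assumes faults keep arriving at the same rate per unit of work for the rest of the verification, which real projects rarely honor. Under that assumption, 14 faults at 30% complete suggests roughly 47 in total (about 33 still to come), and 6 serious faults suggests about 20 in total (about 14 still to come). The function name is hypothetical.

```python
def project_faults(found, fraction_done):
    """Naive constant-rate projection of total and remaining faults.

    Assumes the fault arrival rate per unit of work stays constant,
    which is a simplification and not a claim about any real project.
    """
    if not 0 < fraction_done <= 1:
        raise ValueError("fraction_done must be in the range (0, 1]")
    total = found / fraction_done
    return total, total - found


# Figures from the example: 14 faults, 6 of them serious, at about 30% complete.
total, remaining = project_faults(14, 0.30)
serious_total, serious_remaining = project_faults(6, 0.30)

print(f"all faults: about {total:.0f} total, {remaining:.0f} still to arrive")
print(f"serious faults: about {serious_total:.0f} total, "
      f"{serious_remaining:.0f} still to arrive")
```

A projection like this is best treated as a conversation starter with stakeholders, not as a forecast, and it should be recomputed as each day's results come in.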

Summary

With some creativity, line managers can employ the tactics of agile to improve throughput and enable clear articulation of testing progress. Even if your organization employs conventional project management techniques, there are still ways to benefit from agile concepts. In this case, the testing for the project was treated as a sub-project, and its planning, monitoring, and control closely resembled agile techniques. There were many iterations of the system in this project, and these tools helped speed the work and communicate progress beyond the immediate team.

Test cases may not be the “be-all and end-all” of the verification world. To be sure, there are tests, such as exploratory testing, that are not documented as test cases against requirements. However, test cases against requirements are not trivial either. We can learn much about the product through a diligent and methodical approach to testing, and one element of that approach is the test case.